The following video gives a visual demonstration of The Halting Problem, a highly influential result in computer science, proven by Alan Turing in 1936.

FAQ

Viewers of this video raise many questions and objections. I listed here the frequent ones:

1. Are you saying computers are inferior to humans?

2. Objection: H produces the correct output, but N negates it. It’s not H’s fault.

3. If N is causing all this trouble, why not take it out?

4. Objection: This is a paradox. Obviously a paradox cannot be solved.

5. Objection: It’s not H that is wrong, it is X that is wrong.

8. So can humans solve the halting problem?

9. Will it help if we allow H to print a third output, e.g., “sorry, can’t answer this one”?

10. How do you define “Everything”? Obviously nothing can do really everything.

11. What if we excuse H from handling blueprints that contain H itself?

Or you can read some more about the formal definitions behind this result.

1. Are you saying computers are inferior to humans?

No.

The video informally presents Alan Turing’s halting theorem from 1936. This is a highly influential result which plays an important role in computer science and in some branches of mathematics. It shows that some problems cannot be solved algorithmically (i.e., via computation).

The video makes no comment about humans. See more about humans in “So can humans solve the halting problem?”

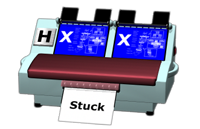

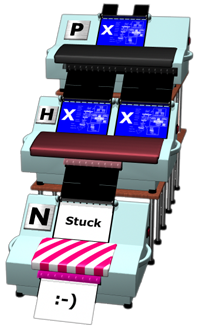

2. Objection: H produces the correct output, but N negates it. It's not H's fault.

Let’s take just H by itself, and feed it two blueprints of X. Let’s assume it says “stuck”:

3. If N is causing all this trouble, why not take it out?

Our goal is prove that H is not perfect, i.e., that there exists some inputs that cause it print the wrong answer. For this goal, it is enough to show one such input.

X is especially designed for this purpose. Its special construction is guaranteed to cause H to fail. It is true of course that if we take out the N, then H will not fail, but we are not interested in an example where H succeeds, we are interested in an example where H fails.

4. Objection: This is a paradox. Obviously a paradox cannot be solved.

The proof shown uses a standard proof-by-contradiction technique: We start with an assumption, reach a contradiction and hence our assumption must be wrong.

More specifically, we assume that H exists, and that it can correctly solve any valid input. From this we conclude that X exists, and that H can’t correctly solve the input (X,X). This contradicts our assumption, hence it’s proof that a perfect H can’t exist.

Some people continue to argue that X is a special kind of paradoxical machine, and that even a perfect H isn’t supposed to be able to handle such machines, because no one can. But there’s no such thing as ‘paradoxical machines’. Any machine is just a piece of hardware and software that performs some computation. This computation gets stuck or not, depending only on the input. A machine which by itself is paradoxical can’t really exist.

5. Objection: It's not H that is wrong, it is X that is wrong.

X is not designed to do anything useful. Some inputs cause it to get stuck, some cause it to print a smile. Both are pretty useless. Since it’s not attempting to achieve anything, X can neither be right, nor wrong.

The only reason we built X is as a test-case for H. H can supposedly tell us if X will get stuck or print a smile for a given input. What we show in the proof, is that when we feed H with the input (X,X) it causes H to print an answer that mismatches what really happens with X. Therefore it is H that is wrong.

6. Objection: H will get stuck. When it simulates X, it will start simulating H, which simulates H, which simulates H...

This is wrong for two reasons:

First, H should be able to handle endless simulations. Imagine that we feed H with a blueprint of A (the arithmetic machine) and a checkers board. As shown in the video, this causes A to get stuck. So when H simulates this case, the simulation will never end. But H has some clever means to detect that the simulation is not going to end, it stops simulating, and prints “stuck”. So if it’s true in the case of X that H’s simulation will be endless, then H should detect that, stop, and print “stuck”. This will cause N to print a smile, proving H wrong again.

But there’s a deeper reason why this is wrong, or at least irrelevant. The video mentions that H performs simulation, but this is not an important assumption. The only important assumption is that H solves the halting problem somehow. It can, for example, instead of relying on simulation, rely on static analysis of the input blueprint. E.g., it can search for certain patterns in the blueprint that indicate it’s going to get stuck. If it’s not performing simulation, then this endless recursion issue never rises.

What the proof in the video shows, is that no matter how we attempt to solve the halting problem, be it using simulation, static analysis, or a combination of both, we will surely fail.

7. Turing proved his theorem in 1936. Is it still relevant today when we have quantum computers and neural networks?

Yes.

Turing proved his theorem on “Turing Machines”, a mathematical model of a computer. This model is equivalent to all computing machines we have, from the dumbest, oldest chip, to the most powerful computers. Of course computers have greatly improved: they are much faster, smaller, have fancier I/O devices, and are easier to program. But that’s it. The basics of what they can achieve in principle hasn’t changed one bit. The most sophisticated machines that employ quantum physics or try to mimic the brain are still basically executing an algorithm that manipulates symbols, just like a Turing machine.

8. So can humans solve the halting problem?

10 n=2

20 n=n+2

30 if there exists two primes p1 and p2 such that n=p1+p2 goto 20

40 print “done”

What do you think, does this program halt? Fact is, no one knows. It is related to Goldbach’s conjecture: If this conjecture is true then this program never halts, and if it is false then it does halt. The best human minds have tried to answer this question, but so far they all failed.

Another interesting question is: can we apply the proof shown in the video to humans? I.e., if there’s some guy named Howard who claims he can solve the halting problem, can we build an X machine around Howard to prove him wrong?

This boils down to one question: when we draw the blueprint of X (which we must as a step in the proof), can we draw a full blueprint of Howard’s brain? One that fully defines Howard, and allows us to simulate him?

This is a controversial question. It has three possible answers:

- Yes we can. This means a brain is basically a computer (=a Turing machine, see question above), so of course we can’t solve the halting problem. A lot of scientists will agree with this answer.

- No, the physics of the brain is too complicated to be drawn on a finite sheet of paper. This means the brain might be more powerful than a computer, and might in principle be able to solve the halting problem. A lot of scientists will find this reasonable too.

- No, the brain has a spiritual side, that cannot be drawn at all. This also means brains may be more powerful than computers. While this answer will make scientists a bit uneasy, we can’t rule it out.

9. Will it help if we allow H to print a third output, e.g., "sorry, can't answer this one"?

No.

To see why, here’s again a definition of the halting problem, a bit more formal this time:

The Halting Problem

Input: A description (blueprint) of a machine M and some input for it d.

Output:

“not stuck” – if M runs a finite amount of time on d.

“stuck” – otherwise.

As you can see, by definition we have only two possible outputs. So any machine that solves this problem will have two outputs. If it has more, then already it deviates from the problem’s definition.

Some people argue still that X is a special case due to some paradoxical nature of its construction. But note that if X really existed then it would have been just some collection of logic circuits or software instructions. There’s nothing really special about it. If we fed it with input, it would have processed it. Now this processing can end in a finite amount of time (=not stuck), or otherwise, it’s stuck. Only two outcomes are possible, just like any other machine.

10. How do you define "Everything"? Obviously nothing can do really everything.

By “everything” we mean every problem we’d expect a computer should be able to solve.

More formally, let’s look at the family of yes/no problems. Here are a few examples:

- Is a given number M a prime?

- Is a given string S a palindrome? (reads the same backward and forward, like “racecar”)

- Is a given text T a valid Java program?

We say a problem is “well defined” if for some specified domain every input instance has one correct yes or no answer. The problem “is a given number M a prime?” is a well defined problem over the domain of numbers. That is, for every number M there’s a clearly defined answer yes or no. In fact all the problems shown above are well defined. An example of a problem that is not well defined would be “Is a given story T interesting?” Of course we have no objective measure to decide if T is interesting or not.

So let’s focus only on well defined problems. We’d expect computers should be able to solve all of them, right? If there always exist a correct answer, then why can’t our computers compute it for us?

This is what they thought in the beginning of the 20th century. It was Kurt Gödel who first disproved it in 1931 with his incompleteness theorems. Alan Turing’s result from 1936 showed an analogous result using the halting problem.

The halting problem is well defined. Every machine M, when fed with some input d, either processes d in finite time, and otherwise it takes infinite time (“not stuck” and “stuck” in the video’s terminology). And yet, as the video shows, computers can’t solve it. Such a problem is called “undecidable”.

11. What if we excuse H from handling blueprints that contain H itself?

First, note that many other programs have no problem handing themselves. Compilers, for example, can compile their own code. Various compression utilities can compress their own source code, or binary files.

But even if we do make an exception in this case, and excuse H from handling blueprints that contain itself, that won’t help. This is because we can easily camouflage H, and hide it inside X.

For example, we can create H1 by adding various harmless tweaks to H. Say H contains the following instruction:

X=X+1

So we’ll replace it with:

X=X+2

X=X-1

So now H1 is not identical to H, but it’s equivalent: it behaves the same way. An X1 machine that contains H1 will still cause the original H machine to fail.

And we can go even further. We can create H2 that is identical to H except instead of “stuck” it prints a random even number, and instead of “not stuck” it prints a random odd number. H2 is not even equivalent to H. But an X2 machine that contains H2 (with the required modifications to N), will still cause H to fail.

If we’ll take X2 and add some additional harmless tweaks we’ll end up with a machine that it’s impossible to see it is in any way related to H. And it would still cause H to fail.